Two years ago I quit my job and went back to indie dev life. With that newfound freedom, and (if I’m being honest) a lack of clear focus, I ended up starting a lot of new projects - an Unreal Engine Plugin, a Node Graph Programming Tool, a New Meshmixer prototype, yet another geometry library, a new kind of 3D shape representation, and a mesh serialization/IPC library, among others. All solidly in my traditional personal-project-wheelhouse, built using tech I have a deep understanding of, with minimal dependencies.

However, in the ~2 months since I started using the Magic Coding Robots, I’ve created more new projects than I did in those entire 2 years. These projects range from throw-away prototypes to pretty solid working software that I now use every day. A common thread between all of them is that they are built using technologies I previously had little to no experience with. The list is … substantial:

- Node.js server with Vite web development environment

- Astro website framework

- Three.js and Babylon.js web 3D frameworks

- React and SolidJS web UI frameworks

- Konva 2D canvas library

- Jolt Physics Engine

- Polyscope Geometry Viewer

- Meta SAM V3 image segmentation model

- meshoptimizer.js mesh simplifier

- Blender and Godot scripting APIs

- NPM packages for PDF rendering, Zip read/write, HTML-to-Markdown conversion, image processing, browser local storage, DOM sanitization, ffmpeg video & gif processing, Google Sheets API, Amazon AWS, and many more

This is an absolutely ridiculous amount of stuff to integrate, for me. And none of this was for my consulting work or main gradientspace projects - this was all for “side quests” of one kind or another, i.e. evening-and-weekend time where I would normally avoid messy dependencies. Because frankly, learning to use a new library is not something I consider “fun”.

Of course, I didn’t do the integration, Claude Code did. I don’t know much more about the actual APIs to these libraries than I did a month ago. In some cases I know a little bit now, because I had to work through design decisions or resolve issues, and I learned a bit along the way. But mostly, these are still black-boxes to me.

And that’s perfect, as far as I’m concerned. I used Jolt because it was easier than PhysX to get the code working on my MacBook Air. My problem was that I needed to collide some spheres with a mesh. At that level, either option would do. I don’t actually care about the differences between Three.js and Babylon.js. My only requirement was “see some 3D models in a webpage”.

As a 3D software developer & researcher, I basically know how these packages have to work under the hood. But at the API level, their respective developers made arbitrary choices about how to configure a light or represent a mesh or capture the mouse. If I really wanted to maximally optimize my code, those choices would be consequential, and might lead me to choose one over the other. But at the level of “I want to experiment with an idea”, all those API-level differences are massive blockers to me. To the point where most ideas I have were simply intractable to try out on a reasonable time-frame.

Complexity & Bullshit

I could have sat down and learned enough about these different libraries and systems and frameworks to implement these projects “by hand”. This would have involved sorting out an enormous amount of bullshit. I mean that in the technical sense - bullshit like, the mouse events come in a slightly different order on macOS vs Windows, or Library A uses sRGB colors and Library B uses Linear, or one of thousands of other complications that would each waste my time for a few hours while I figured it out.
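To make the color-space example concrete, here is a minimal sketch of the glue code that kind of mismatch tends to require. It uses the standard sRGB transfer function on a single channel in the 0 to 1 range. The function names `srgbToLinear` and `linearToSrgb` are hypothetical, made up for illustration, and do not come from any of the libraries listed above.

```typescript
// Sketch only: convert one color channel (0..1) between sRGB-encoded
// and linear values using the standard sRGB piecewise transfer function.
// Function names are hypothetical, not part of any particular library.

function srgbToLinear(c: number): number {
  // Small values sit on a linear segment; the rest follow a 2.4 power curve.
  return c <= 0.04045 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

function linearToSrgb(c: number): number {
  // Inverse of the function above.
  return c <= 0.0031308 ? c * 12.92 : 1.055 * Math.pow(c, 1 / 2.4) - 0.055;
}

// Passing a color from an sRGB-convention library to a linear-convention one
// means converting every channel, otherwise mid-tones come out too bright.
const srgbGray: [number, number, number] = [0.5, 0.5, 0.5];
const linearGray = srgbGray.map(srgbToLinear);
console.log(linearGray);
```

None of this is hard. The bullshit is in finding out that you needed it at all, and on which side of which API boundary.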

This is what so much of software development is today - dealing with all the bullshit. And the wound is self-inflicted! We, as programmers, literally created the bullshit. Not necessarily intentionally (although sometimes it really seems…). It’s just unavoidable when building inside absurdly complex modern software ecosystems. When you are writing a library or building a system, you have to choose some convention for color space or up axis or winding order or … (you get the idea). Sometimes there are “industry standards” - usually at least 2 or 3, that are mutually incompatible. Mostly it’s just individual choices that you, the developer, get to inflict on future generations of programmers.

If you are, say, a university professor or a highly specialized engineer, you might not spend a lot of time in the deep end of the bullshit pool. You are in the coding 1%, where you get to mostly write new code from scratch, and get to say things like “I decided to make a header-only library with no dependencies”. But your average working SWE is literally drowning in bullshit software complexity. And this unintentional complexity is why so much software is janky and broken, and takes so long and costs so much to develop.

The Robots Are Here To Save Us

The thing about the software bullshit I’m describing is that none of it is particularly difficult or challenging in the fun way. 99% of the time it’s just about knowing where the landmines and footguns are hiding. Knowing the right order to call poorly-named and undocumented functions, the right config file to edit, the right --arg syntax. You can always find a Stack Overflow post if you burn enough hours digging.

The Coding Robots do not have this problem. They do not have to spend time trying to decipher old forum threads and GitHub issues and faulty documentation. They have all this information instantly at hand, and are more than happy to wade through the bullshit for you.

There are legitimate concerns about relying too much on the Robots. We probably should not be having them design entire systems that no human has ever looked at, and handing over the keys. We probably shouldn’t forget (or never learn) how to actually program computers. But for this problem of getting Thing A to talk to Thing B, I don’t think those arguments apply. A whole class of problems that humans previously spent an enormous amount of time on - basically to compensate for our own collective blunders - is now trivial and automatic.

If this wasn’t all under the banner of “AI”, I have a hard time imagining programmers wouldn’t welcome this capability. A machine that cuts the Gordian Knot of API complexity. But of course the reality is that today a substantial fraction of software jobs are basically bullshit-complexity-management. And if the bullshit-complexity problem goes away … maybe so will all our jobs?

The Robot Can’t Invent For You

I use my Coding Robot like it’s a highly capable but deeply unimaginative assistant - I look at the current version (usually at the UI level, sometimes spelunking into the code to skim through high-level architecture or APIs) and I say “let’s change X”. I don’t let it decide anything that might be user-facing or systemically important. I find I get the best results when we go step-by-step, rather than biting off too big a chunk at once. I have the ideas, the Robot does the bullshit. We have made some legitimately good things this way, incredibly quickly.

But I did recently make a short visit over to the dark side, and try one of these “orchestration” systems where I wrote a detailed Spec and had a team of “agents” go to town on it. The Spec was for an in-browser 3D fighting game with a networked client/server based on websockets (something I have absolutely never done before). And it did work!! The agents implemented something that satisfied the Spec. It was frankly very impressive that it could “one shot” such a (relatively) complex system. But also, the result was deeply mediocre - it was generic, janky, and ugly (in every sense).

Maybe if I went back and spent 5 times as long on the Spec, it would have done better. But (a) that is boring and (b) there is no way I could have known what needed to change before I saw the shitty version. So it served a purpose. But I went back to step-by-step instructions to evolve that first pass into something that was starting to approach good.

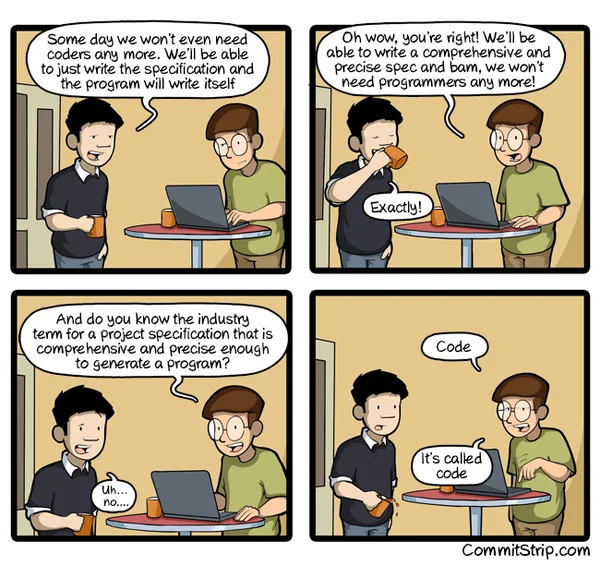

There is an infinite-monkeys effect where yes, sometimes, the agent swarm does something good. But it’s not consistent and it never will be, because creating a specification precise enough to rule out all ambiguity is more work than just writing the software (see comic). And anywhere there is ambiguity, you are going to get a mediocre, generic solution. We’re already seeing this in the tendency for the Robot to select the “standard” library - Node over Bun, Three over Babylon, etc.

So (in my humble opinion) the Robots - although they are great at cutting through the bullshit - cannot, and will never be able to, Invent for you. Because Invention does not happen without motivation, and the Robots don’t have any motivation - it’s why they have to be prompted! They don’t care if the software is good, because they have no conception of “good”. And “good” is context-dependent. Good technical software has completely different rules than a good consumer app. So you’re always going to need someone telling the Robot what is good.

Ultimately this means that some kind of Engineering is still required. People are saying right now that only senior, highly experienced Engineers will be needed, because those Engineers will know how to guide the Robots away from architectural disasters. But “good code” is not enough for good software. You have to iterate your way to good software. You have to put in the time and care about the result and try lots of things and get a feel for the shape of good-ness. That’s actually still a lot of work, and a single person can only hold so much in their head. And even though there is so much software out there already, there is much more to come.

So I’m not really convinced that Engineers are obsolete, now. The Job will change. We’ll spend less time on Bullshit and more time figuring out what is Good. It sounds pretty awesome to me, to be honest…